Based on my conversations with several people, I can see that there is a growing understanding of what is possible with AI within the enterprise. Slowly, we are beginning to understand the ideas behind context engineering and how bringing enterprise context to AI models enables meaningful AI products and features. We are now somewhat able to imagine what an MVP could look like and how, in theory, it benefits users: for example, a conversational agent over recruitment data, or productivity improvements for a software support team.

However, the question of training one’s own model on enterprise data keeps coming up. While one may eventually need to train and create a proprietary model, a lot is possible through context engineering alone, and the training question should not prevent teams from building and shipping products. So it is important now to look ahead at the easily identifiable issues that will need to be addressed in these product initiatives. These can and should be anticipated and planned for, rather than becoming blockers at rollout time.

- Mitigate new risk surfaces: This typically involves questions about whether to send enterprise data over the open internet to general-purpose AI services such as those from OpenAI or Anthropic (Claude). These concerns are valid, but they are also being addressed quickly. We now have sandboxed services from AWS (Bedrock) and Azure (e.g., Azure OpenAI Service). These help address security-related issues such as data residency, prompt and data retention guarantees, prevention of data egress, and ensuring that enterprise data is not retained in the models (for example, through training).

- Plan for and factor in adoption challenges: AI products and features create value only when enterprise users deliberately use them. These users are accustomed to working without such tools and have built systems and workflows that already work for them. Introducing AI is a significant change for them and requires classic change management, which we all know is not easy. However, low usage of AI features should not be labeled as a failure of AI itself. New AI initiatives must be explicit about this reality and plan for it. For example, while some users have moved almost completely away from Googling toward ChatGPT-like tools, they are still a small minority. Daily active usage of general-purpose AI chatbots remains quite low (see Ben Evans’ analysis). Adoption of enterprise systems is a long process, but it is also a deliberate one that must be planned and executed.

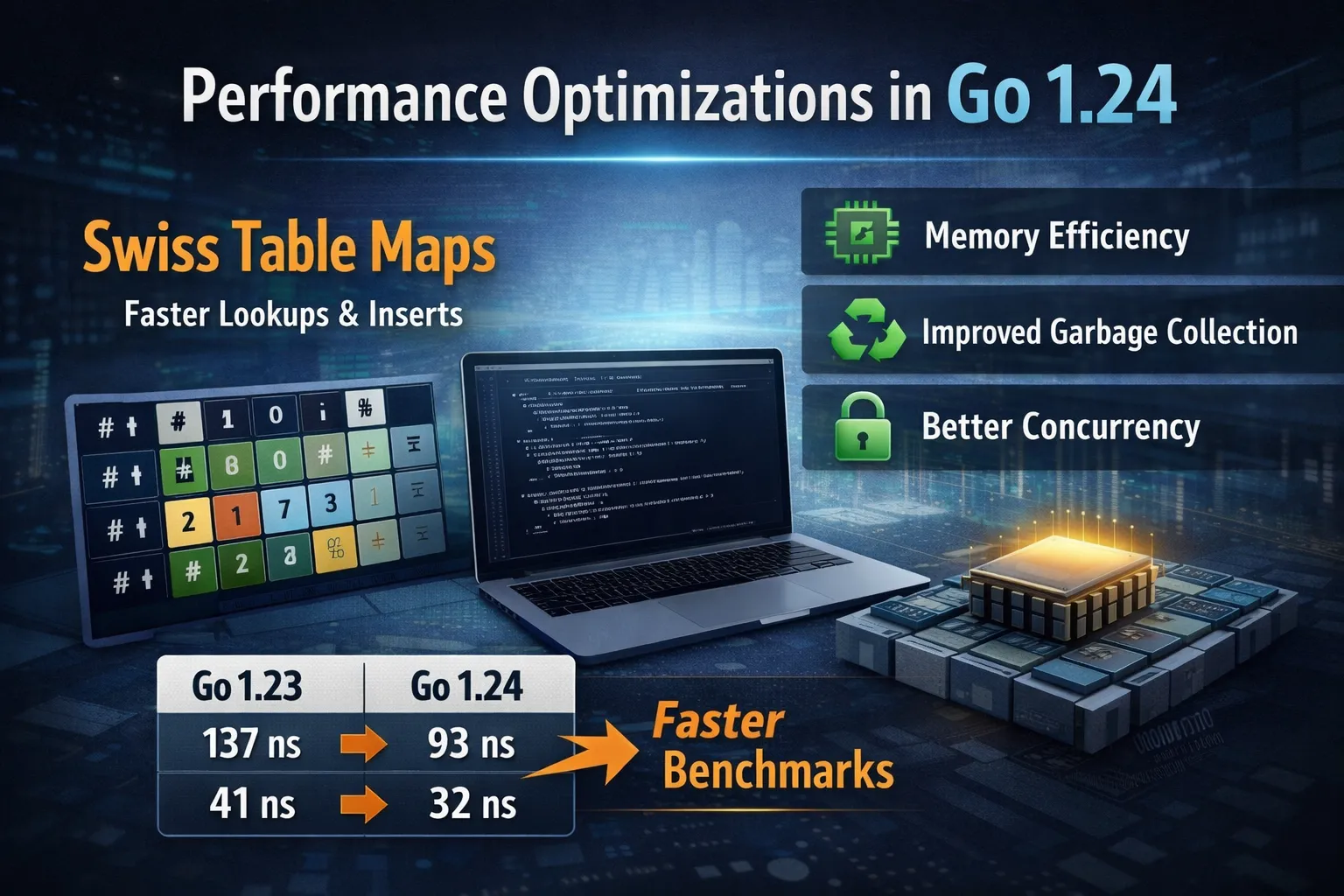

- Low adoption + always-on infrastructure = poor ROI: Given that initial adoption is likely to be low, how does one justify the operational cost of compute and storage resources—particularly for vector or hybrid (vector + lexical) search systems that support AI products? There are a few important cost factors to consider:

a) Traditional vector databases are relatively resource-hungry. Solutions like Turbopuffer, an object-store-backed vector database, offer multiple cost-control levers worth evaluating, such as intelligent tiered storage, serverless and stateless nodes, and smaller vector dimensions.

b) The second issue is embedding churn. Creating embeddings is compute-intensive, and every change to the source data means the affected content must be re-embedded. Re-embedding entire documents on each change can become very expensive. Techniques such as chunking and hashing can help avoid full re-embedding by limiting the work to the chunks whose content actually changed, though other patterns may apply depending on the context. The key point is to treat updates as an important operational cost contributor.
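To make the chunking-and-hashing idea concrete, here is a minimal sketch in Python. It is one possible pattern, not a prescribed implementation. The function names, the paragraph-based chunking rule, and the in-memory `cache` dictionary are all assumptions made for illustration, and `embed` stands in for whatever embedding call a team actually uses.

```python
import hashlib
from typing import Callable, Dict, List

Vector = List[float]


def split_into_chunks(document: str) -> List[str]:
    """Split on blank lines (an assumed chunking rule).

    Paragraph-level chunks mean an edit inside one paragraph leaves
    the text of every other chunk unchanged.
    """
    return [part.strip() for part in document.split("\n\n") if part.strip()]


def chunk_hash(chunk: str) -> str:
    """Stable content fingerprint used as the cache key."""
    return hashlib.sha256(chunk.encode("utf-8")).hexdigest()


def refresh_embeddings(
    document: str,
    cache: Dict[str, Vector],
    embed: Callable[[str], Vector],
) -> List[Vector]:
    """Return one vector per chunk, embedding only unseen chunks.

    `cache` maps chunk hash -> vector. `embed` is a placeholder for the
    real (and expensive) embedding call; it is invoked only on a cache miss.
    """
    vectors: List[Vector] = []
    for chunk in split_into_chunks(document):
        key = chunk_hash(chunk)
        if key not in cache:
            cache[key] = embed(chunk)  # pay the embedding cost only here
        vectors.append(cache[key])
    return vectors
```

Calling `refresh_embeddings` again after editing a single paragraph triggers one new `embed` call instead of one per chunk. This sketch never evicts cache entries for deleted chunks, and a real system would likely persist the hashes alongside the vectors in the search index rather than in a dictionary.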

Value compounds through contextual breadth

AI products and features can be significantly enhanced by making additional, related context sources available to agents over time. This compounds the value delivered to the users.

For example, a log analysis system based purely on logs can be augmented with troubleshooting documentation, source code, and system monitoring metrics. Agents can then be enhanced to provide more useful, situationally aware insights based on this broader context.

From conversations to human-verified action

Ultimately, the trajectory of enterprise AI products should not stop at conversational interfaces, but progress toward human-verified action. This transition requires explicit attention to trust, verification, and responsibility—areas that cannot be bolted on later.

As agents begin to suggest or execute actions (such as creating tickets, modifying configurations, or triggering workflows), systems must be designed with clear confidence thresholds, auditable reasoning, and well-defined human approval points. When designed deliberately, this shift—from answers to assisted action—represents the real business leverage of enterprise AI.
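
As a rough illustration of how a confidence threshold, an audit trail, and a human approval point might fit together, here is a small Python sketch. Everything in it is hypothetical: the `ProposedAction` and `AuditEntry` types, the `review_action` function, and the default threshold of 0.8 are assumptions chosen for the example, not a recommended design or value.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class ProposedAction:
    name: str          # e.g. "create_ticket"
    confidence: float  # agent-reported score in [0, 1]
    reasoning: str     # explanation retained for auditing


@dataclass
class AuditEntry:
    timestamp: str
    action: str
    confidence: float
    reasoning: str
    decision: str


def review_action(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    audit_log: List[AuditEntry],
    min_confidence: float = 0.8,  # assumed threshold, tune per use case
) -> bool:
    """Return True only if the action clears both gates.

    Gate 1: the agent's confidence must reach `min_confidence`.
    Gate 2: a human reviewer (the `approve` callback) must say yes.
    Every outcome, including rejections, is appended to `audit_log`.
    """
    if action.confidence < min_confidence:
        decision = "rejected_low_confidence"  # the human is never asked
    elif approve(action):
        decision = "approved_by_human"
    else:
        decision = "rejected_by_human"

    audit_log.append(
        AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            action=action.name,
            confidence=action.confidence,
            reasoning=action.reasoning,
            decision=decision,
        )
    )
    return decision == "approved_by_human"
```

In this sketch the caller executes the action only when `review_action` returns `True`. Low-confidence proposals are filtered out before they reach a reviewer, which keeps human attention for the cases that merit it, and the log records the agent's stated reasoning next to each decision.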